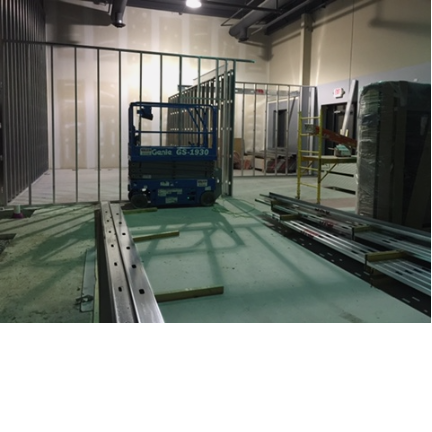

Data Center Build - Construction Begins

It's official: we've finished the initial architecture phase, and construction of the walls and mezzanine structure has begun. The smaller room on the right will be our electrical and UPS room, and the room on the left will be our server room. We'll have capacity for around 20 racks, although some of those positions will be taken up by electrical PDUs and InRow cooling units.

Infrastructure Plans

Now, I know what everyone is thinking at this point: what are we using for HVAC, electrical, etc.? Let us share with you guys what we're building here.

- Electric - 3 onsite transformers: two pre-existing and 1 brand-new transformer with our own primary metering. Each transformer is 300 kVA, for a total of 900 kVA, close to a full megawatt of apparent power.

- Backup - Each transformer is going to support 1 "pod". Each pod will be approximately 30' x 20'. Each pod will have an adjoining electrical room with about 150 kVA of UPS backup and 260 kVA of generator backup (Generac MP-260).

- Cooling - Each pod will have 2 InRow cooling units. Right now we're evaluating units from Liebert, APC and Stulz. Each vendor has units capable of cooling approximately 40 kW apiece. With two enclosed rows, we would have a total of 4 units if higher-density equipment is brought in, and 2 if we see a lower density more consistent with the other colocation facilities we toured.

- Network - Our network will also be redundant. We will be using 48-port 10GbE white-box switches. We will start with a 10GbE core and aggregation layer, but down the road we will upgrade our core to 40GbE to prevent oversaturation of the core layer. We will be running Cumulus Linux on top of Edge-Core switches (AS5712-54X). These switches use the Trident II chipset from Broadcom, which is critical for some of the functionality in Cumulus. They are also x86-based, which opens up a larger list of Linux-based software that will run on this white-box switch.
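
For anyone who wants to play with the power and cooling numbers above, here is a rough back-of-the-envelope sketch in Python. The transformer, UPS, generator and cooling figures come from the list above. The 0.9 power factor and the idea that the full UPS load ends up as heat in the room are assumptions made for illustration, not measured values, and the function names are made up for this example.

```python
# Figures quoted in the post.
TRANSFORMERS = 3
TRANSFORMER_KVA = 300
UPS_KVA_PER_POD = 150
GENERATOR_KVA_PER_POD = 260
COOLING_KW_PER_UNIT = 40

# ASSUMPTION (not from the post): a typical power factor used to turn
# apparent power (kVA) into real power (kW).
ASSUMED_POWER_FACTOR = 0.9


def kva_to_kw(kva, power_factor=ASSUMED_POWER_FACTOR):
    """Convert apparent power in kVA to real power in kW."""
    return kva * power_factor


def cooling_capacity_kw(units, kw_per_unit=COOLING_KW_PER_UNIT):
    """Total cooling capacity for a given number of in-row units."""
    return units * kw_per_unit


def pod_summary(cooling_units):
    """Compare one pod's UPS-backed load with its cooling capacity.

    ASSUMPTION: essentially all UPS-backed power becomes heat that the
    in-row units have to remove.
    """
    ups_kw = kva_to_kw(UPS_KVA_PER_POD)
    cooling_kw = cooling_capacity_kw(cooling_units)
    return {
        "ups_kw": ups_kw,
        "generator_kw": kva_to_kw(GENERATOR_KVA_PER_POD),
        "cooling_kw": cooling_kw,
        "cooling_covers_ups_load": cooling_kw >= ups_kw,
    }


if __name__ == "__main__":
    total_kva = TRANSFORMERS * TRANSFORMER_KVA
    print(f"Site total: {total_kva} kVA, about {kva_to_kw(total_kva):.0f} kW "
          f"at an assumed power factor of {ASSUMED_POWER_FACTOR}")
    for units in (2, 4):
        print(f"{units} cooling units per pod:", pod_summary(units))
```

Under these assumptions, 2 units would only cover a pod running below its full UPS capacity, while 4 units would cover a pod loaded to the full UPS rating. That lines up with the plan to go to 4 units only if higher-density equipment shows up.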

POSTED BY Joshua Holmes IN GENERAL ON